Tesla has pushed Full Self-Driving (Supervised) v14.3 to owners via an over-the-air update, shipping inside software version 2026.2.9.6. The release, whose notes were published on NotATeslaApp and flagged by Tesla reporter Sawyer Merritt on X, is one of the larger FSD point releases in recent memory. The headline change is a ground-up rewrite of the AI compiler and runtime using MLIR (Multi-Level Intermediate Representation), which Tesla claims delivers a 20% faster reaction time and speeds up future model iteration. That is a measurable claim attached to a specific architectural decision, not a vague software polish pass.

The update also touches parking behavior, small animal handling, traffic light logic at complex intersections, rare-object detection, and the system’s ability to recover from temporary degradations without driver intervention. Three additional improvements are listed as upcoming, not yet shipped.

The MLIR Compiler Rewrite Is the Structural Change Here

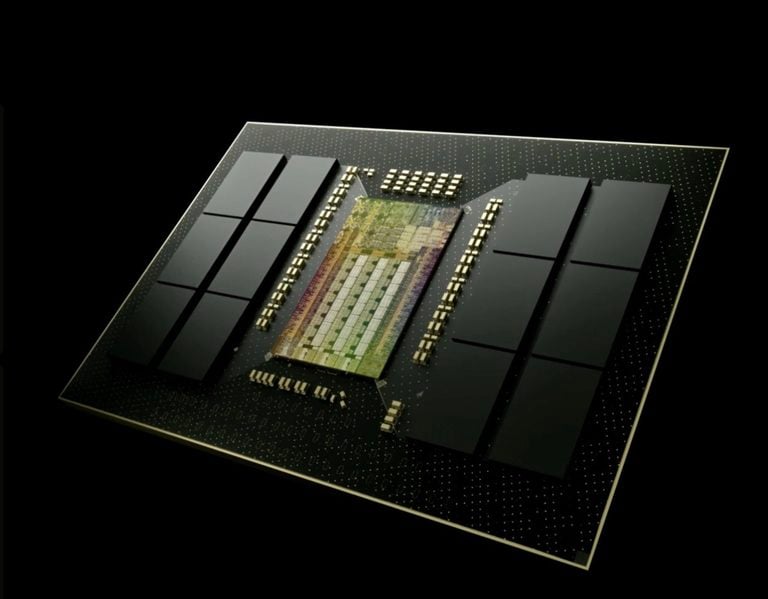

Tesla rewrote the FSD AI compiler and runtime from scratch using MLIR, an open-source compiler infrastructure developed at Google and now hosted under the LLVM project, where it is widely used in machine learning frameworks. The claimed result is a 20% improvement in reaction time and faster iteration speed for future model training. Tesla’s release notes do not specify whether the 20% figure is measured against v14.2 or an earlier baseline, and no independent third-party verification is available at the time of publication.
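To put the 20% figure in physical terms, here is a minimal sketch that converts a reaction-time reduction into distance travelled before the system begins to respond. Tesla has not published a baseline latency, so the `BASELINE_S` value below is purely hypothetical, and the function names are illustrative. The sketch also assumes "20% faster" means the latency itself shrinks by 20%, which is one possible reading of the release notes.

```python
def distance_during_reaction(speed_kmh: float, reaction_s: float) -> float:
    """Metres travelled at constant speed before the system starts to respond."""
    return speed_kmh / 3.6 * reaction_s  # km/h -> m/s, then multiply by seconds


def metres_saved(speed_kmh: float, baseline_s: float, improvement: float = 0.20) -> float:
    """Distance saved if reaction latency shrinks by `improvement` (0.20 = 20%)."""
    improved_s = baseline_s * (1.0 - improvement)
    return (distance_during_reaction(speed_kmh, baseline_s)
            - distance_during_reaction(speed_kmh, improved_s))


if __name__ == "__main__":
    BASELINE_S = 0.5  # hypothetical latency in seconds, NOT a Tesla figure
    for speed in (50, 100, 130):
        saved = metres_saved(speed, BASELINE_S)
        print(f"{speed} km/h: {saved:.2f} m saved")
```

Under these assumptions, a 0.5-second baseline at 100 km/h works out to roughly 2.8 metres less distance covered before the system reacts. The saving scales linearly with both speed and baseline latency, which is why the unstated baseline matters when judging the claim.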

This follows software update 2026.2.9, which shipped FSD v14.2.2.5 and included functional changes to the neural network vision encoder alongside the Navigate on Autopilot rename to Navigate on Autosteer. V14.3 takes that vision encoder work further, with Tesla citing improved understanding in rare and low-visibility scenarios, stronger 3D geometry recognition, and expanded traffic sign reading.

Upgrading the underlying compiler is not a routine patch. MLIR allows Tesla’s engineers to optimize neural network operations across different hardware targets more efficiently, which matters especially as the fleet spans HW3 and HW4 vehicles. Faster model iteration means Tesla can push trained improvements to production more quickly, which in theory tightens the feedback loop between fleet data and on-road behavior.

What Actually Changed on the Road

Beyond the compiler rewrite, v14.3 brings a set of targeted behavioral fixes. Tesla’s release notes, as published on NotATeslaApp, list the following changes shipped in this version:

- Improved parking location pin prediction, now displayed on the map with a P icon

- Increased decisiveness in parking spot selection and maneuvering

- Upgraded Reinforcement Learning (RL) stage of FSD neural network training, covering a wider range of driving scenarios

- Upgraded neural network vision encoder for rare and low-visibility scenarios, 3D geometry, and traffic sign understanding

- AI compiler and runtime rewritten with MLIR: 20% faster reaction time (Tesla’s claimed figure, unverified), faster model iteration

- Reduced unnecessary lane biasing and minor tailgating behaviors

- Enhanced response to emergency vehicles, school buses, right-of-way violators, and other rare vehicles

- Improved small animal handling via harder RL training examples and proactive safety rewards

- Better traffic light handling at complex intersections, including compound lights, curved roads, and yellow light stopping, trained on hard RL examples from the Tesla fleet

- Improved detection of rare or unusual objects extending, hanging, or leaning into the vehicle path, sourced from infrequent fleet events

- Improved handling of temporary system degradations, maintaining control and recovering automatically without driver intervention, reducing unnecessary disengagements

Several of these address edge cases that have shown up in NHTSA investigations and crash reports. Tesla’s own crash data filed with federal regulators showed the robotaxi fleet in Austin crashing at four times the rate of Tesla’s own internal benchmark and up to nine times the NHTSA standard for human drivers. Improvements to emergency vehicle response, rare object handling, and intersection behavior are exactly the categories that matter in that context.

Three Features Are Listed as Coming, Not Here Yet

Tesla’s release notes include an “Upcoming Improvements” section with three items that are not part of v14.3. Owners should not expect these in the current build:

- Expanded reasoning to all behaviors beyond destination handling

- Pothole avoidance

- Improved driver monitoring system sensitivity with better eye gaze tracking, eyewear handling, and higher accuracy in variable lighting conditions

The driver monitoring improvement is worth watching. NHTSA has scrutinized Tesla’s driver attention systems repeatedly. More accurate gaze tracking in variable lighting conditions directly addresses one of the documented failure modes in inattentive-driver incidents.

Pothole avoidance is a practical addition that has appeared in competitor driver-assistance systems for several years. It is not an advanced autonomy feature; it is road surface response. That Tesla is listing it as upcoming rather than shipped suggests the detection confidence threshold is not yet where the company wants it.

Context: Where v14.3 Fits in Tesla’s FSD Arc

V14.3 arrives as Tesla is pushing FSD internationally. The Netherlands approval process for FSD (Supervised) was in its final stage as of March 20, with the RDW (Rijksdienst voor het Wegverkeer, the Dutch vehicle authority) communicating an expected approval date of April 10. A more capable v14.3 build, with improved reaction times and vision encoder performance, strengthens Tesla’s regulatory case in markets that will scrutinize real-world performance data.

Domestically, Tesla’s California robotaxi service continues to operate under a limousine permit rather than a standard autonomous vehicle license, with no CPUC reporting requirements. The FSD software improvements in v14.3 do not change that regulatory situation, but they give Tesla’s safety narrative more substance to work with.

The FSD v14 line also carries some real-world weight beyond spec sheets. Earlier this year, FSD v14.1.3 drove a man to the ER during a STEMI heart attack, after his son remotely shared the destination through the Tesla app. That was not a designed use case, but it demonstrated the system handling an unscripted, high-stakes situation. V14.3’s improvements to rare scenario handling and system degradation recovery add to that foundation.

EVXL’s Take

The MLIR rewrite is the real story in v14.3, and it is easy to miss inside a long bullet list. Rewriting a compiler from scratch is not a feature you ship to check a box. It is infrastructure work that pays off over many subsequent releases. If Tesla’s 20% reaction time claim holds up in independent testing, that is a meaningful safety margin in the scenarios where FSD has historically struggled most: sudden obstacles, rare vehicles, degraded conditions.

I have not yet driven a vehicle running v14.3. Before calling the reaction time improvement verified, I want to see real-world disengagement data from NHTSA filings after several weeks of fleet-wide operation, not just Tesla’s internal claim. The company has a consistent pattern of quoting improvement percentages that sound impressive but resist direct benchmarking. The MLIR architecture is legitimate and publicly documented. The 20% figure needs time to prove itself.

The broader pattern here connects directly to what we covered in Tesla’s 2026.2.9 update: Tesla is iterating on FSD faster in 2026 than in any prior year. V14.2.2.5 shipped in early March. V14.3 is already out. The cadence is accelerating, and that matters more than any single version number. It shows Tesla closing the loop between fleet data, training, and deployment at a pace its competitors cannot currently match on a vehicles-already-on-road basis. By Q3 2026, v14.3’s compiler architecture will likely look like a foundation release rather than a feature drop. That is when the real performance delta becomes visible.

EVXL uses automated tools to support research and source retrieval. All reporting and editorial perspectives are by Haye Kesteloo.
